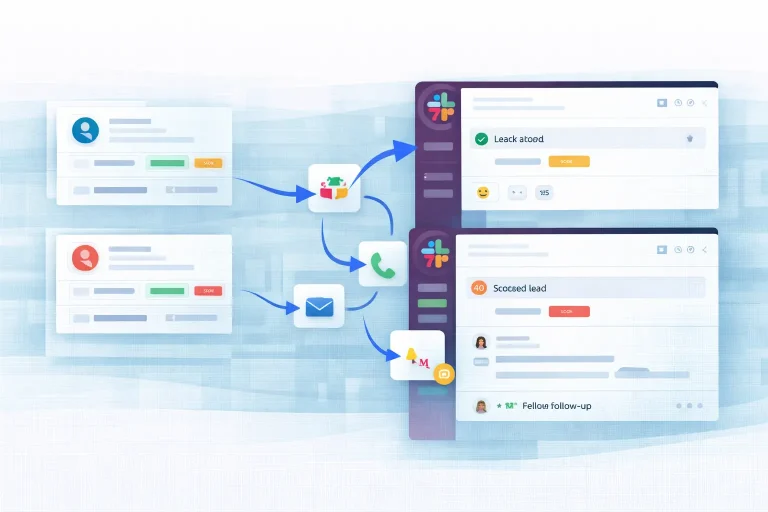

Lead Qualification Slack Workflow Template

This template is based on a real n8n workflow and is designed for lead qualification. It connects the source steps,...

Discover ready-to-use automation templates and tools, and learn how to build AI workflows for lead generation, content, research, operations, and more.

Browse ready-to-use workflow templates for common AI and automation use cases, from lead generation and research to content operations and productivity.

Explore the platforms, APIs, AI models, and data tools used to build, run, and scale practical automation workflows.

Read tutorials, comparisons, workflow ideas, and implementation guides to help you choose the right setup and build better automations.

Start with curated workflow templates for high-value use cases across lead generation, content automation, research, marketing, and productivity.

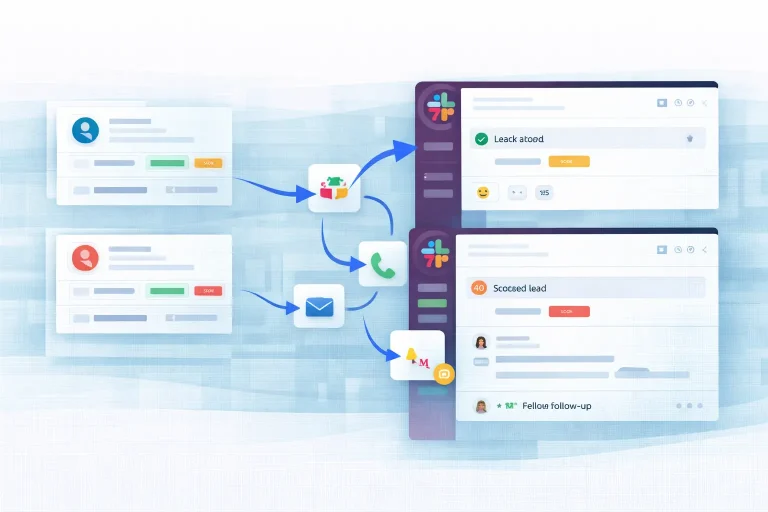

This template is based on a real n8n workflow and is designed for support ticket routing. It connects the source...

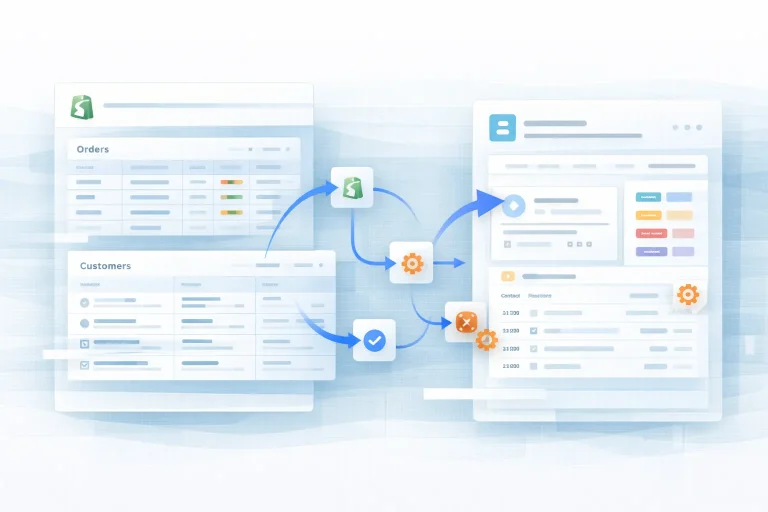

This template is based on a real n8n workflow and is designed for Shopify CRM sync. It connects the source...

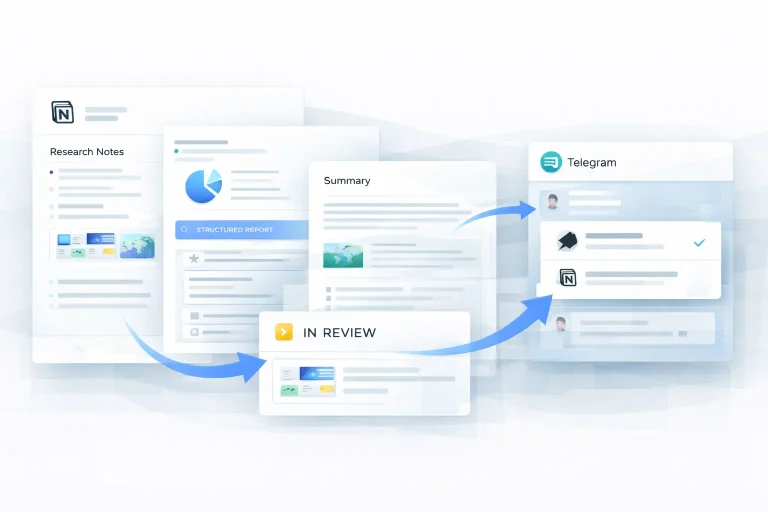

This template is based on a real n8n workflow and is designed for research report generation. It connects the source...

This template is based on a real n8n workflow and is designed for content workflow automation. It connects the source...

This template is a full social publishing pipeline rather than a single post generator. It creates platform-specific copy, optional visuals,...

Explore workflow templates by business function, from lead generation and content automation to research, support, and operations.

Create workflows for content generation, publishing, and repurposing. (7 templates)

Streamline support workflows, triage, and response operations. (2 templates)

Move, clean, and report business data through structured automations. (5 templates)

Build prospecting, enrichment, and outbound workflow templates. (11 templates)

Run campaign, lead nurture, and marketing operations automations. (4 templates)

Support internal workflows, task routing, and operational processes. (2 templates)

Automate collection, summarization, and structured research tasks. (8 templates)

Automate sales handoffs, CRM updates, and pipeline processes. (4 templates)

Compare the automation platforms, AI tools, APIs, and knowledge systems commonly used in modern AI workflows.

n8n is a workflow automation platform for technical teams that want flexible integrations, API-driven processes, self-hosted deployment, and stronger support for internal tools and AI workflows.

Zapier is a no-code workflow automation platform that helps users connect apps and automate repetitive tasks across thousands of tools. It is widely used for app integrations, business process automation, and AI-powered workflows. Because teams can build automations quickly without managing infrastructure, it is a common choice for marketing, sales, and operations workflows.

Make is a no-code workflow automation platform that enables users to design, build, and automate workflows across apps and APIs using a visual interface. It is widely used for complex automation scenarios, data processing, and multi-step workflows.

OpenAI is an AI platform that provides large language models and APIs for building AI-powered applications and workflows. It is widely used for text generation, reasoning, and automation systems. It is especially suitable for developers and teams building AI workflows and applications.

Pipedream lets teams automate workflows, connect APIs, and embed integrations in products with managed auth, code steps, SDKs, and REST APIs.

OpenManus is an open-source AI agent framework for developers who want to build, run, and extend general-purpose agents with tool use, model APIs, and self-hosted control.

Read practical tutorials, tool comparisons, workflow ideas, and best practices for building more effective automations.

This guide explains what an AI agent actually is, how it differs from simpler chat or automation setups,...

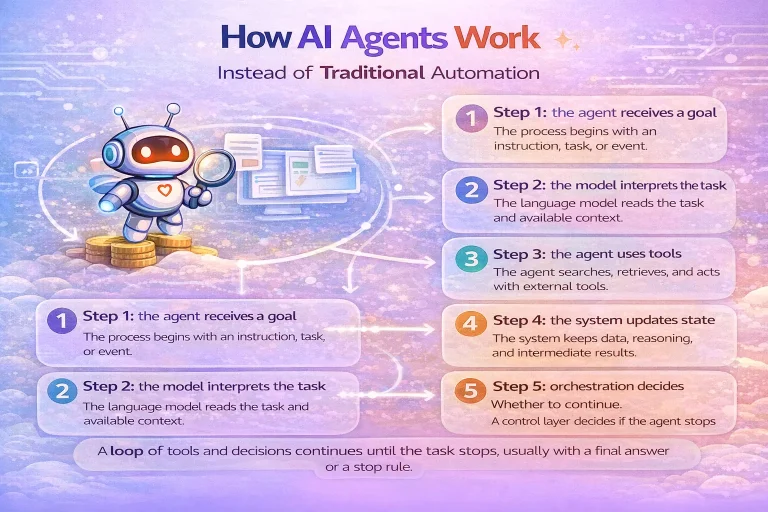

This guide breaks down how AI agents work at an operational level so you can understand the model,...

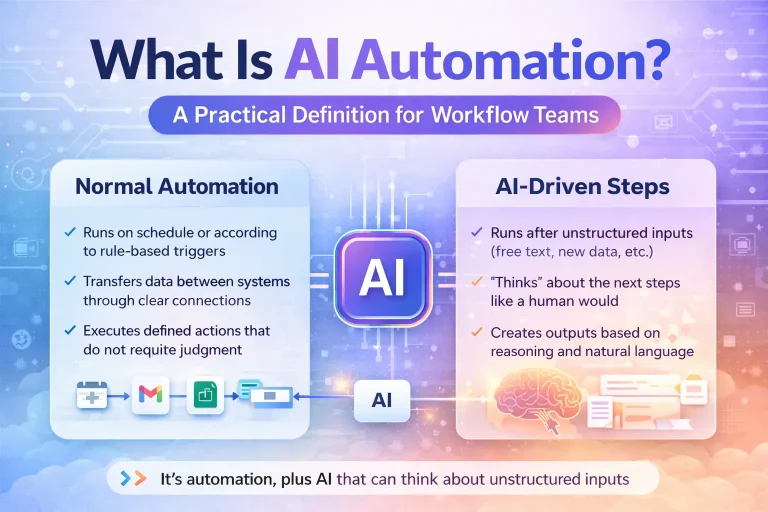

This guide explains what AI automation actually means for workflow teams, not just in theory but in day-to-day...

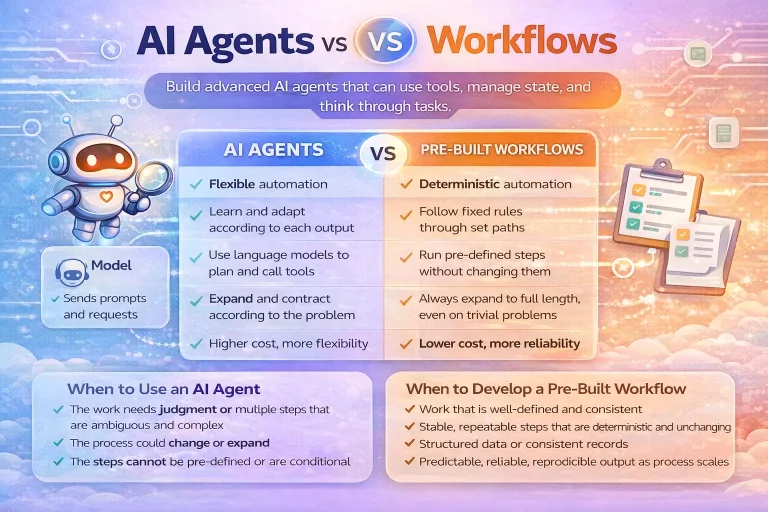

This guide compares AI agents and workflows from an implementation perspective rather than a hype perspective. It focuses...

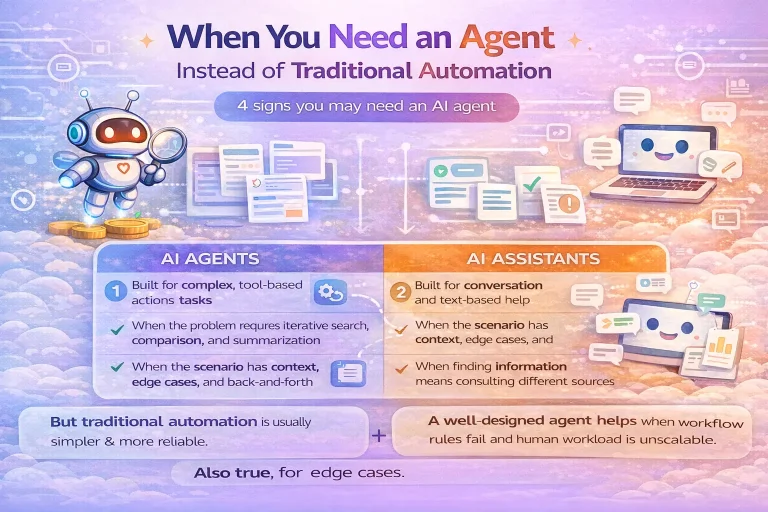

This guide explains when an AI agent is the right architectural choice and when a simpler workflow, routing...

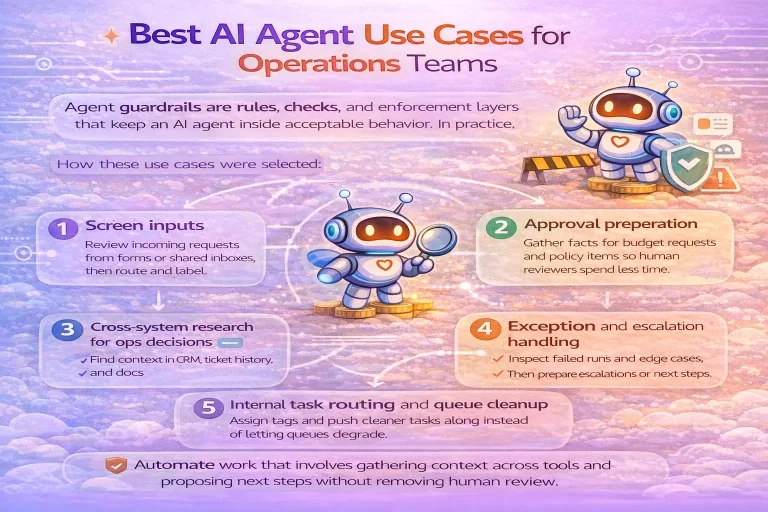

This guide covers the most practical AI agent use cases for operations teams, how to evaluate them, and...

Use WorkflowLibrary.ai to find workflow templates, compare tools, and learn how to create smarter automations for real-world use cases.