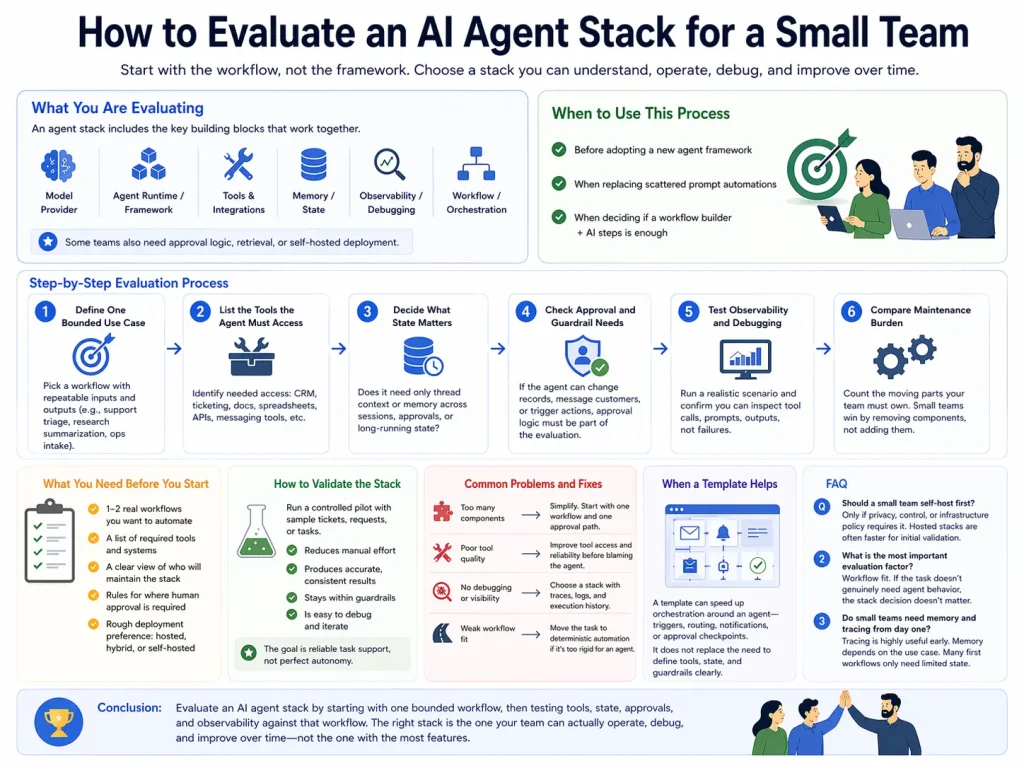

How to Evaluate an AI Agent Stack for a Small Team

A small team should evaluate an AI agent stack by checking whether the stack matches its workflow needs, maintenance capacity, approval requirements, and tool surface.

This guide shows how a small team can evaluate an AI agent stack without overbuying complexity or choosing tools it cannot realistically operate.

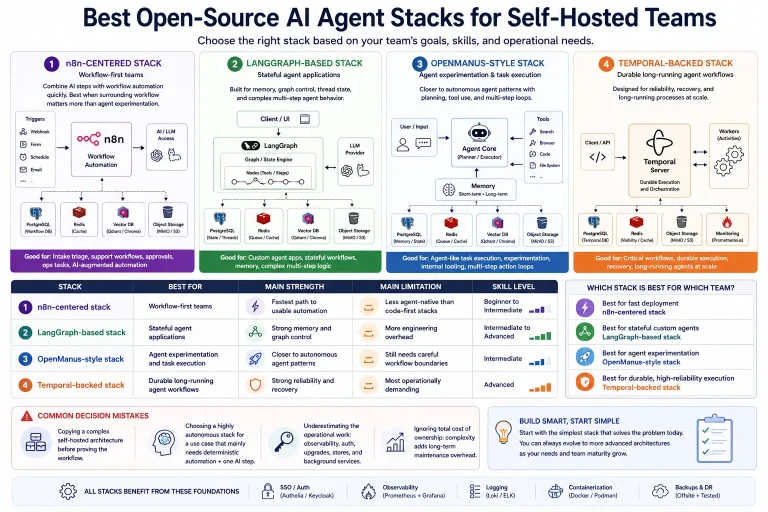

To evaluate an AI agent stack for a small team, start with the workflow you want to run, not the framework you want to buy. A small team usually needs a stack that is understandable, maintainable, and narrow enough to operate without a dedicated platform group.

The right stack is rarely the one with the most features. It is the one that gives you enough control over tools, state, approvals, and debugging without forcing your team to maintain an oversized architecture.

What you are evaluating

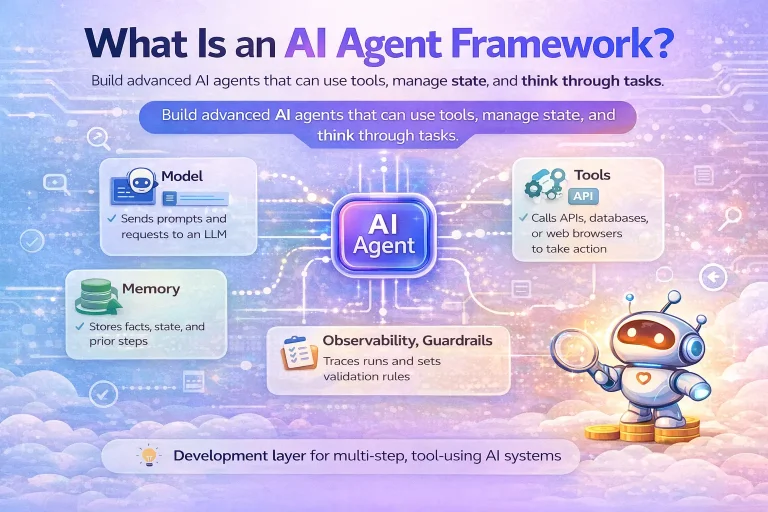

An agent stack usually includes a model provider, an agent runtime or framework, tool access, memory or state, observability, and a surrounding workflow or orchestration layer. Some teams also need approval logic, retrieval, or self-hosted deployment.
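One way to make this list concrete is to write down which layers a candidate stack covers and which ones your workflow actually needs. The sketch below uses layer labels of our own choosing, taken from the paragraph above. They are illustrative names, not terms from any specific product, and the candidate stack is hypothetical.

```python
from enum import Enum


class Layer(Enum):
    """Illustrative layer labels; rename them to match your own architecture notes."""
    MODEL = "model provider"
    RUNTIME = "agent runtime or framework"
    TOOLS = "tool access"
    STATE = "memory or state"
    OBSERVABILITY = "observability"
    ORCHESTRATION = "workflow or orchestration"
    APPROVALS = "approval logic"
    RETRIEVAL = "retrieval"


def missing_layers(needed: set[Layer], provided: set[Layer]) -> set[Layer]:
    """Return the layers the workflow needs that the candidate stack does not cover."""
    return needed - provided


# Hypothetical candidate: a hosted framework that ships tracing but no approval step.
candidate = {Layer.MODEL, Layer.RUNTIME, Layer.TOOLS, Layer.OBSERVABILITY}
needed = {Layer.MODEL, Layer.TOOLS, Layer.OBSERVABILITY, Layer.APPROVALS}

gaps = missing_layers(needed, candidate)
print(sorted(layer.value for layer in gaps))  # ['approval logic']
```

Each gap is either something your team builds and maintains, or a reason to look at a different candidate.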

When to use this evaluation process

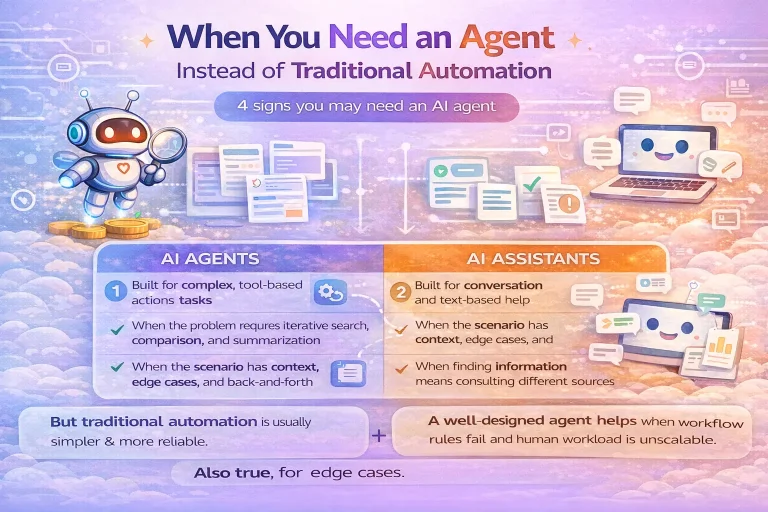

Use this process before adopting a new agent framework, when replacing scattered prompt automations, or when deciding whether a workflow builder plus AI steps is already enough.

What you need before you start

- One or two real workflows you want to automate

- A list of required tools and systems

- A clear view of who will maintain the stack

- Rules for where human approval is required

- A rough deployment preference: hosted, hybrid, or self-hosted
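Writing these prerequisites down as a small structured record makes gaps obvious before any vendor demo. This is a hypothetical `EvaluationBrief` dataclass. The field names and the checks in `problems()` are assumptions that mirror the list above, not a standard format.

```python
from dataclasses import dataclass, field

DEPLOYMENT_OPTIONS = {"hosted", "hybrid", "self-hosted"}


@dataclass
class EvaluationBrief:
    workflows: list[str]          # one or two real workflows
    required_tools: list[str]     # systems the agent must reach
    maintainer: str               # who owns the stack day to day
    approval_rules: list[str] = field(default_factory=list)
    deployment: str = "hosted"

    def problems(self) -> list[str]:
        """Return reasons the brief is not ready for an evaluation yet."""
        issues = []
        if not 1 <= len(self.workflows) <= 2:
            issues.append("pick one or two concrete workflows")
        if not self.required_tools:
            issues.append("list the tools and systems the agent must access")
        if not self.maintainer.strip():
            issues.append("name who will maintain the stack")
        if not self.approval_rules:
            issues.append("state where human approval is required")
        if self.deployment not in DEPLOYMENT_OPTIONS:
            issues.append(f"deployment must be one of {sorted(DEPLOYMENT_OPTIONS)}")
        return issues


brief = EvaluationBrief(
    workflows=["support triage"],
    required_tools=["ticketing API", "docs search"],
    maintainer="ops lead",
)
print(brief.problems())  # ['state where human approval is required']
```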

Step-by-step evaluation process

Step 1: Define one bounded use case

Pick a workflow with repeatable inputs and outputs, such as support triage, research summarization, or ops intake. Avoid evaluating against a vague goal like “build an AI teammate.”
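A bounded use case can be written as a fixed input shape plus a closed set of outputs. The sketch below assumes a support-triage workflow. The `Ticket` and `TriageResult` fields and the `TRIAGE_LABELS` set are hypothetical, and `is_valid_output` is the kind of check that lets you score pilot runs mechanically.

```python
from dataclasses import dataclass

# Closed output set: every run must end in exactly one of these labels.
TRIAGE_LABELS = frozenset({"billing", "bug", "how-to", "escalate"})


@dataclass(frozen=True)
class Ticket:
    ticket_id: str
    subject: str
    body: str


@dataclass(frozen=True)
class TriageResult:
    ticket_id: str
    label: str
    summary: str


def is_valid_output(ticket: Ticket, result: TriageResult) -> bool:
    """A result is valid if it answers the right ticket with a known label and a summary."""
    return (
        result.ticket_id == ticket.ticket_id
        and result.label in TRIAGE_LABELS
        and bool(result.summary.strip())
    )


ticket = Ticket("T-1", "Charged twice", "My card was billed two times this month.")
good = TriageResult("T-1", "billing", "Duplicate charge reported.")
vague = TriageResult("T-1", "handle it like a teammate would", "")
print(is_valid_output(ticket, good), is_valid_output(ticket, vague))  # True False
```

If you cannot write the output side as a closed set or a checkable shape, the goal is probably still too vague to evaluate.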

Step 2: List the tools the agent must access

Identify whether the system needs CRM reads, ticketing access, docs retrieval, spreadsheets, internal APIs, or messaging tools. This often determines whether a workflow builder, an agent framework, or both are needed.
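A tool inventory is easier to reason about when each entry records whether the call writes data and whether it always happens at the same step. The rule in `suggest_layer` is a rough heuristic assumed for illustration, not an established classification: fixed-order calls fit a workflow builder, model-chosen calls need an agent framework, and a mix points to both.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolNeed:
    name: str
    writes: bool       # does the call change data in another system?
    fixed_order: bool  # is the call always made at the same step?


def suggest_layer(tools: list[ToolNeed]) -> str:
    """Hypothetical heuristic: map the tool list to the kind of stack it implies."""
    fixed = any(t.fixed_order for t in tools)
    dynamic = any(not t.fixed_order for t in tools)
    if fixed and dynamic:
        return "workflow builder + agent framework"
    if dynamic:
        return "agent framework"
    return "workflow builder"


def write_tools(tools: list[ToolNeed]) -> list[str]:
    """Tools that change records; these are the ones to review for approval."""
    return [t.name for t in tools if t.writes]


tools = [
    ToolNeed("ticketing: read new ticket", writes=False, fixed_order=True),
    ToolNeed("docs retrieval", writes=False, fixed_order=False),
    ToolNeed("CRM lookup", writes=False, fixed_order=False),
    ToolNeed("ticketing: set label", writes=True, fixed_order=True),
]
print(suggest_layer(tools))  # workflow builder + agent framework
print(write_tools(tools))    # ['ticketing: set label']
```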

Step 3: Decide what state matters

Check whether the workflow needs only thread context, or whether it also needs memory across sessions, state for pending approvals, or long-running execution state.
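One way to make this decision explicit is to rank the kinds of state from lightest to heaviest and record the heaviest one the workflow actually uses. The ordering in `StateNeed` is an assumption for illustration. The point is that each step up adds storage or infrastructure the team has to run.

```python
from enum import IntEnum


class StateNeed(IntEnum):
    """Illustrative ranking, lightest first; each level adds something to operate."""
    THREAD_CONTEXT = 1    # conversation history within a single run
    CROSS_SESSION = 2     # memory that persists between separate sessions
    PENDING_APPROVAL = 3  # a run that pauses until a human responds
    LONG_RUNNING = 4      # execution state that survives restarts or retries


def heaviest_need(requirements: dict[str, StateNeed]) -> StateNeed:
    """Return the most demanding state level any requirement calls for."""
    return max(requirements.values(), default=StateNeed.THREAD_CONTEXT)


requirements = {
    "summarize the current ticket thread": StateNeed.THREAD_CONTEXT,
    "wait for a lead to approve a refund": StateNeed.PENDING_APPROVAL,
}
print(heaviest_need(requirements).name)  # PENDING_APPROVAL
```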

Step 4: Check approval and guardrail needs

If the agent can change records, message customers, or trigger account actions, approval logic must be part of the evaluation. Small teams should strongly prefer stacks where approval is easy to add.
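The core of an approval step can be small: classify actions that have side effects and refuse to run them until a person says yes. This is a minimal sketch, assuming a hypothetical `Action` record and an `approve` callback that stands in for however your team approves things (a chat button, a ticket comment, a CLI prompt).

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical action kinds that change records, contact customers, or touch accounts.
SIDE_EFFECT_KINDS = frozenset({"update_record", "message_customer", "account_action"})


@dataclass(frozen=True)
class Action:
    kind: str
    target: str
    payload: str


class ApprovalDenied(Exception):
    pass


def run_with_approval(
    action: Action,
    execute: Callable[[Action], str],
    approve: Callable[[Action], bool],
) -> str:
    """Run read-only actions directly; require approval for side-effecting ones."""
    if action.kind in SIDE_EFFECT_KINDS and not approve(action):
        raise ApprovalDenied(f"{action.kind} on {action.target} was not approved")
    return execute(action)


def execute(action: Action) -> str:
    # Stand-in for the real tool call.
    return f"done: {action.kind} -> {action.target}"


def deny_all(action: Action) -> bool:
    # Stand-in approver that rejects everything.
    return False


print(run_with_approval(Action("read_record", "CRM#42", ""), execute, deny_all))
# done: read_record -> CRM#42
try:
    run_with_approval(Action("message_customer", "user@example.com", "Hi"), execute, deny_all)
except ApprovalDenied as err:
    print(err)  # message_customer on user@example.com was not approved
```

Keeping the gate outside the agent's own reasoning means a prompt change cannot quietly remove it.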

Step 5: Test observability and debugging

Run one realistic scenario and confirm that you can inspect tool calls, prompts, outputs, and failure points. If you cannot debug the run, the stack will become expensive to maintain later.
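If a candidate stack offers no tracing, a thin wrapper around each tool call shows what you would be missing. The `traced` decorator and the in-memory `TRACE` list below are a hypothetical sketch, not any framework's tracing API. A real stack would write these records to its own trace store.

```python
import functools
import time
from typing import Any, Callable

TRACE: list[dict[str, Any]] = []  # in-memory stand-in for a trace store


def traced(tool: Callable[..., Any]) -> Callable[..., Any]:
    """Record inputs, output or error, and duration for every call to `tool`."""
    @functools.wraps(tool)
    def wrapper(*args: Any, **kwargs: Any) -> Any:
        record: dict[str, Any] = {"tool": tool.__name__, "args": args, "kwargs": kwargs}
        start = time.perf_counter()
        try:
            record["output"] = tool(*args, **kwargs)
            return record["output"]
        except Exception as exc:
            record["error"] = repr(exc)
            raise
        finally:
            record["seconds"] = round(time.perf_counter() - start, 4)
            TRACE.append(record)
    return wrapper


@traced
def lookup_customer(customer_id: str) -> dict[str, str]:
    if not customer_id.startswith("C-"):
        raise ValueError(f"bad id {customer_id!r}")
    return {"id": customer_id, "plan": "pro"}


lookup_customer("C-7")
try:
    lookup_customer("7")
except ValueError:
    pass

for entry in TRACE:
    print(entry["tool"], entry.get("output"), entry.get("error"))
# lookup_customer {'id': 'C-7', 'plan': 'pro'} None
# lookup_customer None ValueError("bad id '7'")
```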

Step 6: Compare maintenance burden

Ask how many moving parts the team must own: hosted model, framework runtime, vector store, memory store, background jobs, auth, and deployment. Small teams usually win by removing components, not adding them.
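A quick way to compare candidates is to list each component and mark whether your team runs it or a provider does. The component names and the two candidate stacks below are hypothetical. The useful output is the count of parts your team owns.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Component:
    name: str
    team_owned: bool  # True if your team deploys, patches, and monitors it


def owned_parts(stack: list[Component]) -> list[str]:
    """Names of the components your team has to operate itself."""
    return [c.name for c in stack if c.team_owned]


# Hypothetical candidates for the same support-triage workflow.
lean = [
    Component("hosted model API", team_owned=False),
    Component("workflow builder (managed)", team_owned=False),
    Component("tool credentials / auth", team_owned=True),
]
heavy = [
    Component("hosted model API", team_owned=False),
    Component("framework runtime", team_owned=True),
    Component("vector store", team_owned=True),
    Component("memory store", team_owned=True),
    Component("background job queue", team_owned=True),
    Component("tool credentials / auth", team_owned=True),
    Component("deployment pipeline", team_owned=True),
]

for label, stack in [("lean", lean), ("heavy", heavy)]:
    print(label, len(owned_parts(stack)))
# lean 1
# heavy 6
```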

How to validate the stack

A good first validation is a controlled pilot with sample tickets, requests, or tasks. Measure whether the stack reduces manual effort without creating opaque failures or unsafe actions. The goal is not perfect autonomy. The goal is reliable task support.
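A pilot is easier to judge when every run is logged with the same few fields. This sketch assumes a hypothetical `PilotRun` record with minutes of manual work before and after, plus flags for opaque failures and unsafe actions. The pass criteria in `pilot_passes` are placeholders to set with your team, not recommended thresholds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PilotRun:
    manual_minutes_before: float  # time the task took by hand
    manual_minutes_after: float   # human time still needed with the agent
    opaque_failure: bool          # failed in a way the traces could not explain
    unsafe_action: bool           # attempted an action that should have needed approval


def summarize(runs: list[PilotRun]) -> dict[str, float]:
    if not runs:
        raise ValueError("no pilot runs recorded")
    before = sum(r.manual_minutes_before for r in runs)
    after = sum(r.manual_minutes_after for r in runs)
    return {
        "effort_reduction": (before - after) / before if before else 0.0,
        "opaque_failure_rate": sum(r.opaque_failure for r in runs) / len(runs),
        "unsafe_actions": float(sum(r.unsafe_action for r in runs)),
    }


def pilot_passes(summary: dict[str, float], min_reduction: float = 0.3,
                 max_opaque_rate: float = 0.05) -> bool:
    """Placeholder criteria: any unsafe action fails the pilot outright."""
    return (
        summary["unsafe_actions"] == 0
        and summary["effort_reduction"] >= min_reduction
        and summary["opaque_failure_rate"] <= max_opaque_rate
    )


runs = [
    PilotRun(10, 4, False, False),
    PilotRun(12, 5, False, False),
    PilotRun(8, 8, True, False),
    PilotRun(10, 3, False, False),
]
summary = summarize(runs)
print(summary)  # {'effort_reduction': 0.5, 'opaque_failure_rate': 0.25, 'unsafe_actions': 0.0}
print(pilot_passes(summary))  # False
```

Here manual effort drops by half, but one run in four failed in a way the team could not explain, so the pilot does not pass. Saved minutes do not count for much if the failures are opaque.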

Common problems and fixes

- Too many components: remove optional layers and start with one workflow plus one approval path.

- Poor tool quality: improve tool access before blaming the agent.

- No debugging: choose a stack with traces or execution history.

- Weak workflow fit: if the workflow is a fixed sequence of steps that needs no agent judgment, move it back into deterministic automation.
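When the workflow turns out to be rigid, a few explicit rules often do the job with no model in the loop. The keyword rules below are a hypothetical example for support triage. Anything they cannot place goes to a human queue rather than to an agent.

```python
# Hypothetical keyword rules, checked in order; the first match wins.
RULES: list[tuple[tuple[str, ...], str]] = [
    (("refund", "charged", "invoice"), "billing"),
    (("error", "crash", "broken"), "bug"),
    (("how do i", "how to"), "how-to"),
]


def route(subject: str) -> str:
    """Deterministic routing: same input, same label, no model call."""
    text = subject.lower()
    for keywords, label in RULES:
        if any(k in text for k in keywords):
            return label
    return "human-review"


print(route("Charged twice this month"))    # billing
print(route("How do I export data?"))       # how-to
print(route("Question about partnership"))  # human-review
```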

When a template helps

A template can speed up the orchestration around an agent, such as triggers, routing, notifications, or approval checkpoints. It does not remove the need to define tools, state, and guardrails clearly.
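To see what a template does and does not cover, it can help to write it as a config with explicit empty slots. The keys and values below are hypothetical. The orchestration slots arrive pre-filled, while tools, state, and guardrails stay undefined until the team decides them.

```python
from typing import Any

# Hypothetical template: orchestration slots come pre-filled,
# but the agent-specific decisions are left for the team to define.
TEMPLATE: dict[str, Any] = {
    "trigger": "new ticket created",
    "routing": "by triage label",
    "notifications": "post summary to team channel",
    "approval_checkpoint": "before any customer-facing reply",
    "tools": None,
    "state": None,
    "guardrails": None,
}


def undefined_fields(config: dict[str, Any]) -> list[str]:
    """List the slots a template leaves empty."""
    return [key for key, value in config.items() if value is None]


print(undefined_fields(TEMPLATE))  # ['tools', 'state', 'guardrails']
```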

FAQ

Should a small team self-host first?

Only if privacy, control, or infrastructure policy requires it. Hosted stacks are often faster for initial validation.

What is the most important evaluation factor?

Workflow fit. If the task does not genuinely need agent behavior, the stack decision does not matter.

Do small teams need memory and tracing from day one?

Tracing is highly useful early. Memory depends on the use case. Many first workflows only need limited state.

Conclusion

A small team should evaluate an AI agent stack by starting with one bounded workflow, then testing tools, state, approvals, and observability against that workflow. The right stack is the one your team can actually operate, debug, and improve over time.