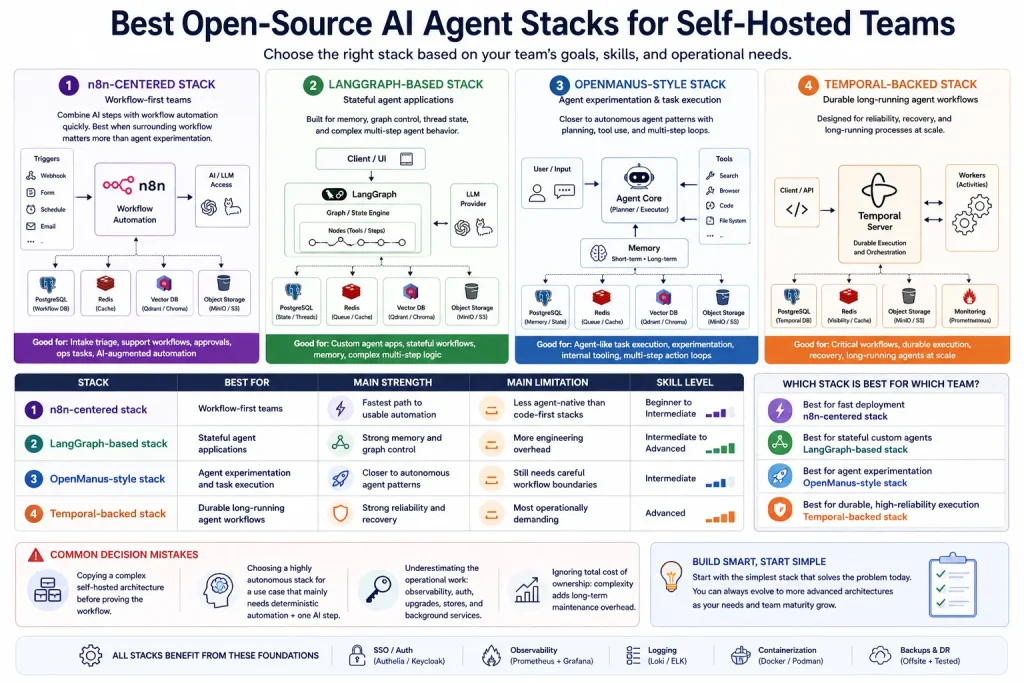

Best Open-Source AI Agent Stacks for Self-Hosted Teams

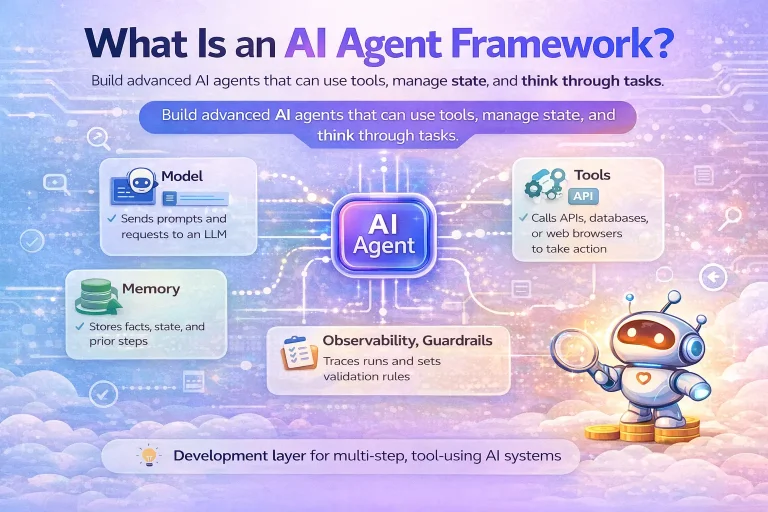

The best open-source AI agent stack depends on whether your team prioritizes workflow orchestration, agent experimentation, durable execution, or a full self-hosted AI workbench.

This guide compares the best open-source AI agent stacks for self-hosted teams and helps you choose based on control, complexity, and operational fit.

The best open-source AI agent stack for a self-hosted team depends on what “stack” means in your environment. If you want a practical workflow-first stack, n8n combined with model access and supporting data stores is one of the easiest self-hosted starting points. If you want deeper agent orchestration, LangGraph-based stacks or OpenManus-style setups are stronger choices. If reliability and long-running execution matter most, a stack built around durable orchestration, such as a Temporal-backed one, becomes more compelling.

There is no single best stack for every self-hosted team because the tradeoff is always between setup speed and control. Small teams usually need less architecture than they think.

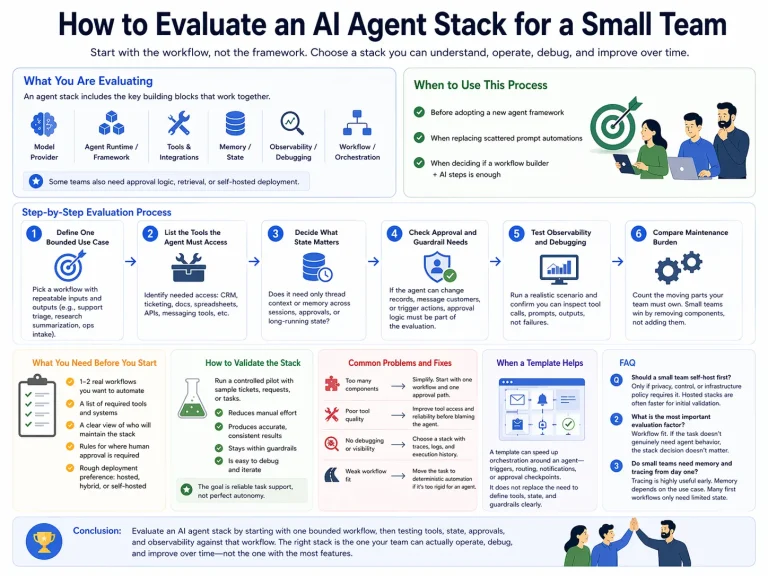

How the stacks were selected

The stacks in this guide were chosen based on self-hostability, open-source availability, practical workflow fit, observability, and how well they support real tool-using agent behavior. The goal is not to crown one winner. It is to help you choose the right level of complexity.

Summary comparison table

| Stack | Best for | Main strength | Main limitation | Skill level |
|---|---|---|---|---|
| n8n-centered stack | Workflow-first teams | Fastest path to usable automation | Less agent-native than code-first stacks | Beginner to Intermediate |
| LangGraph-based stack | Stateful agent applications | Strong memory and graph control | More engineering overhead | Intermediate to Advanced |
| OpenManus-style stack | Agent experimentation and task execution | Closer to autonomous agent patterns | Still needs careful workflow boundaries | Intermediate |
| Temporal-backed stack | Durable long-running agent workflows | Strong reliability and recovery | Most operationally demanding | Advanced |

1. n8n-centered self-hosted stack

This stack is best for teams that want to combine AI steps with workflow automation quickly. It works well for intake triage, support workflows, agent-assisted ops tasks, and human approval flows. It is the best choice when the surrounding workflow matters more than agent experimentation.
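To make the workflow-first shape concrete, here is a minimal Python sketch of an intake triage flow: deterministic steps wrapped around a single AI step, with a human approval gate. It does not use n8n or its API. The names `Ticket`, `classify_ticket`, `needs_approval`, and `run_triage` are hypothetical, and the classifier is a keyword stub standing in for a real model call.

```python
from dataclasses import dataclass, field


@dataclass
class Ticket:
    subject: str
    body: str
    labels: list = field(default_factory=list)
    status: str = "new"


def classify_ticket(ticket: Ticket) -> str:
    """Hypothetical stand-in for the single AI step (a model call in practice)."""
    text = f"{ticket.subject} {ticket.body}".lower()
    if "refund" in text or "charge" in text:
        return "billing"
    if "error" in text or "crash" in text:
        return "bug"
    return "general"


def needs_approval(label: str) -> bool:
    """Deterministic rule (an assumption of this sketch): billing waits for a human."""
    return label == "billing"


def run_triage(ticket: Ticket) -> Ticket:
    label = classify_ticket(ticket)   # the one AI step
    ticket.labels.append(label)       # deterministic bookkeeping
    ticket.status = "awaiting_approval" if needs_approval(label) else "routed"
    return ticket


ticket = run_triage(Ticket(subject="Refund request", body="I was charged twice"))
print(ticket.labels, ticket.status)
```

The point of the shape is that everything except `classify_ticket` is ordinary, inspectable workflow logic, which is what a visual workflow tool tends to make easy.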

2. LangGraph-based stack

LangGraph-based stacks are better when memory, graph control, thread state, and long-running multi-step behavior matter. They are stronger for developers building agent applications that need explicit state management and more custom behavior.
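The idea of explicit state and per-thread memory can be illustrated without any framework. The sketch below is a stdlib-only toy, not the LangGraph API: `TinyGraph`, `add_node`, `run`, and the two node functions are hypothetical names chosen for illustration. Each node takes a state dict and returns a new one, and the state is checkpointed per thread after every node.

```python
from typing import Callable, Dict, Optional

State = dict
Node = Callable[[State], State]
Router = Callable[[State], Optional[str]]


class TinyGraph:
    """Hypothetical illustration of a state graph; not the LangGraph API."""

    def __init__(self) -> None:
        self.nodes: Dict[str, Node] = {}
        self.routers: Dict[str, Router] = {}
        self.checkpoints: Dict[str, State] = {}  # thread_id -> last saved state

    def add_node(self, name: str, fn: Node, next_node: Router) -> None:
        self.nodes[name] = fn
        self.routers[name] = next_node

    def run(self, thread_id: str, entry: str, updates: State) -> State:
        # Resume from the thread's saved state, then merge in the new input.
        state = {**self.checkpoints.get(thread_id, {}), **updates}
        current: Optional[str] = entry
        while current is not None:
            state = self.nodes[current](state)
            self.checkpoints[thread_id] = dict(state)  # save after each node
            current = self.routers[current](state)
        return state


def remember(state: State) -> State:
    history = state.get("history", []) + [state["message"]]
    return {**state, "history": history}


def reply(state: State) -> State:
    return {**state, "reply": f"seen {len(state['history'])} message(s)"}


graph = TinyGraph()
graph.add_node("remember", remember, lambda s: "reply")
graph.add_node("reply", reply, lambda s: None)

graph.run("thread-1", "remember", {"message": "hi"})
second = graph.run("thread-1", "remember", {"message": "again"})
print(second["history"], second["reply"])
```

Because the state is an explicit value passed between nodes and keyed by thread, it is straightforward to inspect, persist, or resume, which is the property the paragraph above describes.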

3. OpenManus-style stack

This type of stack is useful for teams exploring more agent-like task execution and multi-step action loops. It fits experimentation and internal tooling well, but it still benefits from surrounding workflow and approval layers.
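One way to picture "surrounding workflow and approval layers" is an action loop with a step budget, a tool allowlist, and an approval gate for sensitive tools. The sketch below is a generic illustration and is not taken from OpenManus. The function `run_agent_loop`, its parameters, and the tool names are hypothetical, and `plan` stands in for actions that a model would normally propose one at a time.

```python
from typing import Callable, Dict, List, Tuple

Action = Tuple[str, str]  # (tool name, argument)


def run_agent_loop(
    plan: List[Action],
    tools: Dict[str, Callable[[str], str]],
    approve: Callable[[Action], bool],
    sensitive: frozenset = frozenset({"delete_file"}),
    max_steps: int = 5,
) -> List[str]:
    """Execute proposed actions under a step budget and an approval gate."""
    log: List[str] = []
    for step, (tool, arg) in enumerate(plan):
        if step >= max_steps:
            log.append("stopped: step budget exhausted")
            break
        if tool not in tools:
            # Anything outside the allowlist is never executed.
            log.append(f"skipped: unknown tool {tool}")
            continue
        if tool in sensitive and not approve((tool, arg)):
            log.append(f"blocked: {tool} {arg}")
            continue
        log.append(f"{tool}: {tools[tool](arg)}")
    return log


tools = {
    "read_file": lambda path: f"contents of {path}",
    "delete_file": lambda path: f"deleted {path}",
}
plan = [("read_file", "a.txt"), ("delete_file", "a.txt"), ("shell", "ls")]

# Deny every sensitive action, as a cautious default reviewer would.
log = run_agent_loop(plan, tools, approve=lambda action: False)
for line in log:
    print(line)
```

In a real deployment the `approve` callback would route to a person or a policy service; the sketch only shows where that boundary sits relative to the loop.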

4. Temporal-backed agent stack

If your self-hosted team cares about durable execution, recovery, and long-running processes, a Temporal-backed stack has a real advantage. It is not the easiest starting point, but it is often the right one for critical systems.
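The general idea behind durable execution can be sketched as journaling each completed step so that a rerun after a crash skips finished work. The example below is a stdlib-only toy and does not use the Temporal SDK; Temporal provides this kind of recovery through its own workflow and activity model. `run_durable`, the journal file, and the two demo steps are hypothetical names for illustration.

```python
import json
import os
import tempfile
from typing import Callable, Dict, List, Tuple

Step = Tuple[str, Callable[[], str]]


def run_durable(steps: List[Step], journal_path: str) -> Dict[str, str]:
    """Run steps in order, recording results so a rerun skips finished steps."""
    done: Dict[str, str] = {}
    if os.path.exists(journal_path):
        with open(journal_path) as fh:
            done = json.load(fh)
    for name, fn in steps:
        if name in done:
            continue  # completed in an earlier run; do not repeat
        done[name] = fn()
        with open(journal_path, "w") as fh:
            json.dump(done, fh)  # persist before moving to the next step
    return done


calls: List[str] = []
attempts = {"summarize": 0}


def fetch() -> str:
    calls.append("fetch")
    return "data"


def summarize() -> str:
    attempts["summarize"] += 1
    if attempts["summarize"] == 1:
        raise RuntimeError("simulated crash")
    return "summary"


steps = [("fetch", fetch), ("summarize", summarize)]
journal = os.path.join(tempfile.mkdtemp(), "journal.json")

try:
    run_durable(steps, journal)       # first run crashes inside "summarize"
except RuntimeError:
    pass

result = run_durable(steps, journal)  # rerun resumes after "fetch"
print(result, calls)
```

Even this toy hints at the operational cost: someone has to own the journal's storage, format, and cleanup, which is part of why a durable stack is the most demanding option in the table.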

Which stack is best for which team?

- Best for fast deployment: n8n-centered stack

- Best for stateful custom agents: LangGraph-based stack

- Best for agent experimentation: OpenManus-style stack

- Best for durable, high-reliability execution: Temporal-backed stack
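The list above can be expressed as a small decision helper. This is only a sketch: `TeamNeeds` and `recommend_stack` are hypothetical names, and the precedence order when several needs apply is an assumption of the example rather than a rule from this guide. The default follows the guide's advice to start with the smallest workable stack.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TeamNeeds:
    durable_long_running: bool = False
    stateful_custom_agents: bool = False
    agent_experimentation: bool = False


def recommend_stack(needs: TeamNeeds) -> str:
    # Precedence is an assumption of this sketch, not a rule from the guide.
    if needs.durable_long_running:
        return "Temporal-backed stack"
    if needs.stateful_custom_agents:
        return "LangGraph-based stack"
    if needs.agent_experimentation:
        return "OpenManus-style stack"
    # No special requirement: fastest deployment wins.
    return "n8n-centered stack"


print(recommend_stack(TeamNeeds()))
print(recommend_stack(TeamNeeds(stateful_custom_agents=True)))
```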

Common decision mistakes

One mistake is copying a complex self-hosted architecture before proving the workflow. Another is choosing a highly autonomous stack for a use case that mainly needs deterministic automation plus one AI step. Self-hosted teams should also be realistic about operations: observability, auth, upgrades, stores, and background services all add work.

FAQ

What is the easiest self-hosted stack to start with?

A workflow-first stack, such as the n8n-centered one, is usually the easiest because it gives you visible orchestration and fewer moving parts.

Which stack is best for memory-heavy agents?

LangGraph-based stacks are often stronger when persistent state and memory design are central.

Should small teams use Temporal from day one?

Only when long-running, failure-sensitive execution is central to the product or process.

Conclusion

The best open-source AI agent stack for a self-hosted team depends on what you are optimizing for: speed, control, experimentation, or durability. Most teams should start with the smallest stack that can support their first real workflow, then add more infrastructure only when the workflow demands it.