AI Agents vs Assistants

A practical comparison of AI agents and assistants, focused on execution depth, user control, and workflow fit.

This guide compares AI agents and assistants in plain terms so you can decide which model fits your product or workflow. It focuses on interaction, autonomy, tool use, and real implementation tradeoffs.

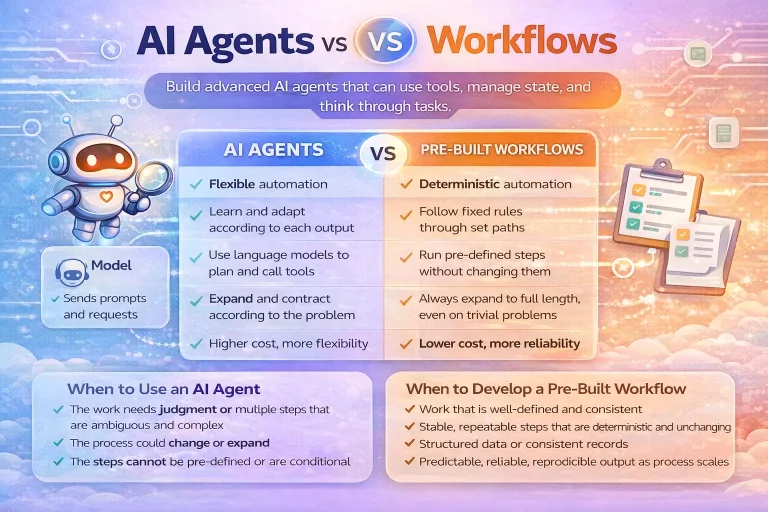

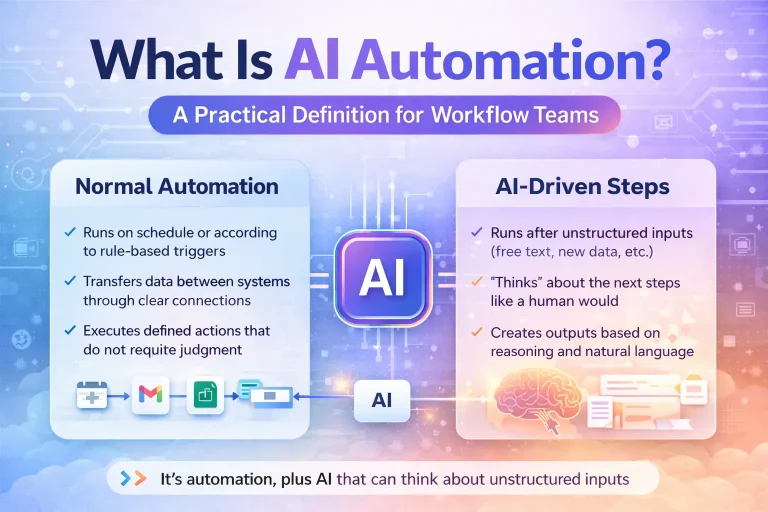

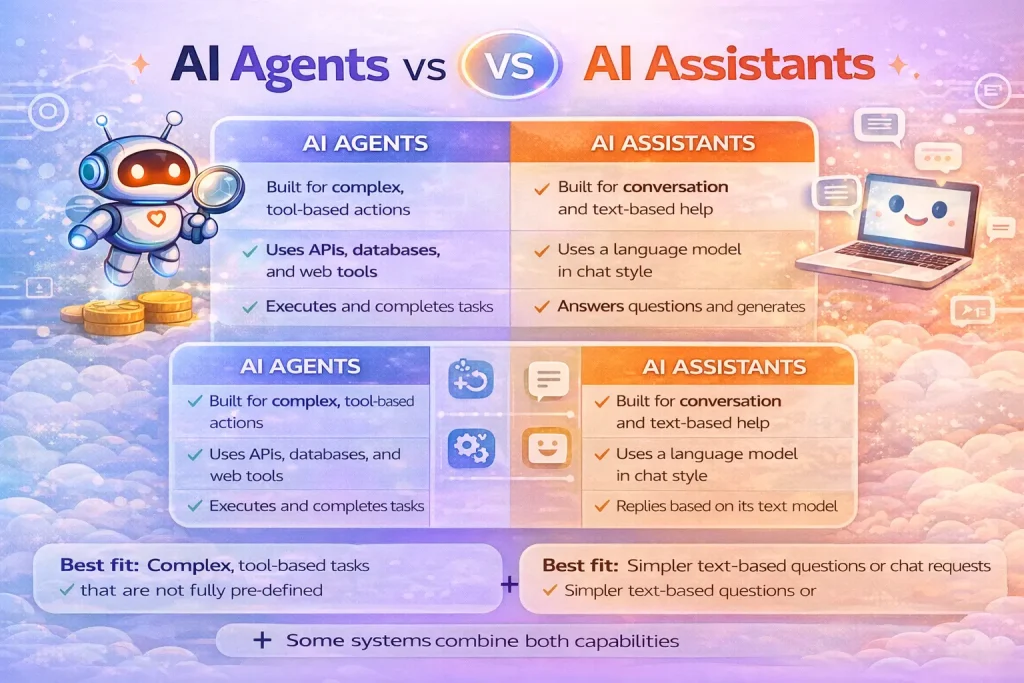

AI agents and assistants overlap, but they are not the same thing. An assistant is usually designed around direct interaction with a user. It answers questions, follows instructions, and may use tools during that conversation. An agent is broader: it is designed to work through a goal, often with more autonomy, multiple steps, and structured orchestration behind the scenes.

If you want a fast verdict, choose an assistant when the main experience is conversational help. Choose an agent when the system must carry out a task across tools, state, and decision points with less hand-holding from the user.

What each term usually means

An assistant is generally a user-facing AI helper. It may live inside chat, voice, support, or productivity interfaces. The user stays in the loop continuously and the interaction itself is the primary product experience.

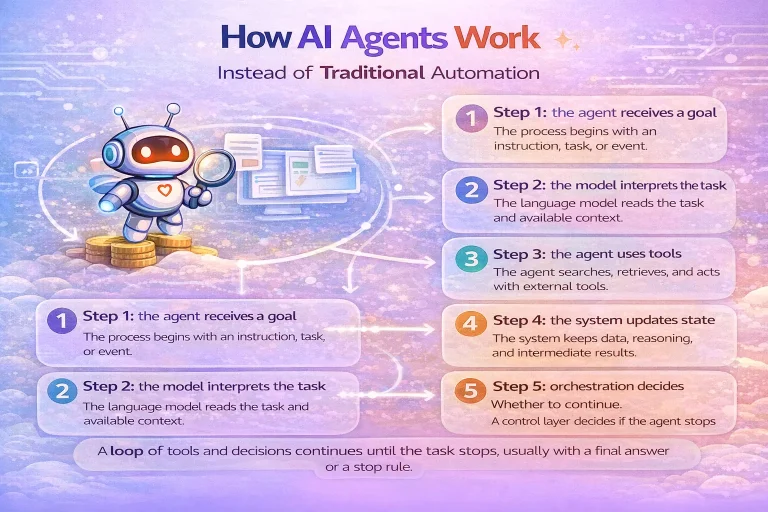

An agent is usually a task-execution system. It may still speak to the user, but its job is not only to converse. It must gather context, choose actions, use tools, and complete work across one or more steps.

Quick comparison table

| Option | Best for | Main strength | Main limitation | Skill level |
|---|---|---|---|---|
| Assistant | Interactive user help | Simple conversational UX | May stop at advice instead of action | Beginner |
| Agent | Multi-step task execution | Can act across tools and workflows | Needs more orchestration and safeguards | Intermediate |

The main difference

The main difference is execution depth. Assistants are centered on interaction. Agents are centered on task completion. A user may ask both to “help with a support issue,” but the assistant is likely to explain or draft, while the agent may retrieve account context, classify urgency, consult policy, prepare a reply, and request approval before sending.
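
To make the support example concrete, here is a minimal Python sketch of the two shapes. Everything in it is hypothetical: the `Ticket` type, the function names, the keyword-based urgency check, and the policy strings are placeholders for real lookups and model calls, not a reference implementation.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Ticket:
    customer_id: str
    text: str


def assistant_reply(ticket: Ticket) -> str:
    """Interaction-first: return a draft and leave every action to the user."""
    return f"Suggested reply: Sorry about the trouble with '{ticket.text}'."


@dataclass
class AgentRun:
    steps: List[str] = field(default_factory=list)
    sent: bool = False


def agent_handle(ticket: Ticket, approve: Callable[[str], bool]) -> AgentRun:
    """Task-first: walk through the steps, then ask before sending."""
    run = AgentRun()

    # Placeholder for a real account lookup.
    account = {"customer_id": ticket.customer_id, "plan": "pro"}
    run.steps.append("retrieve_account")

    # Placeholder classifier: a keyword check stands in for a model call.
    urgency = "high" if "outage" in ticket.text.lower() else "normal"
    run.steps.append(f"classify_urgency:{urgency}")

    # Placeholder policy text, invented for this sketch.
    policy = "respond within 1 hour" if urgency == "high" else "respond within 1 day"
    run.steps.append("consult_policy")

    draft = f"Hi {account['customer_id']}, we are on it ({policy})."
    run.steps.append("prepare_reply")

    # Approval point: nothing is sent unless the callback agrees.
    run.steps.append("request_approval")
    if approve(draft):
        run.sent = True
        run.steps.append("send")
    return run
```

Calling `agent_handle(ticket, approve=lambda draft: False)` runs every step up to the approval request and then stops without sending, which is the "request approval before sending" behavior described above.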

Which one is easier to build

Assistants are usually easier to ship because the scope is narrower. You can start with chat, a few tools, and clear instructions. The user remains the primary coordinator, so the system does not need as much autonomy or runtime control logic.

Agents are harder because once the system acts more independently, you must define tool permissions, stopping rules, approval points, observability, and fallback behavior. That additional infrastructure is the real cost of agentic behavior.
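
The controls listed above can be sketched as a small guarded loop. This is an illustrative outline under simplifying assumptions: the plan is a fixed list of steps (a real agent would usually let a model choose the next action), and the tool names, the step limit, and the log format are all made up for the example.

```python
from typing import Callable, Dict, List, Tuple

ALLOWED_TOOLS = {"search_docs", "draft_reply"}  # tool permissions
NEEDS_APPROVAL = {"send_email"}                 # approval points
MAX_STEPS = 5                                   # stopping rule


def run_agent(
    plan: List[Tuple[str, str]],
    tools: Dict[str, Callable[[str], str]],
    approve: Callable[[str, str], bool],
) -> List[str]:
    """Run planned (tool, argument) steps and return a log of what happened."""
    log: List[str] = []  # observability: every decision is recorded
    for step, (tool_name, arg) in enumerate(plan):
        if step >= MAX_STEPS:
            log.append("stopped: step limit reached")
            break
        if tool_name in NEEDS_APPROVAL:
            if not approve(tool_name, arg):
                log.append(f"denied: {tool_name}")
                continue
        elif tool_name not in ALLOWED_TOOLS:
            log.append(f"blocked: {tool_name}")
            continue
        try:
            result = tools[tool_name](arg)
            log.append(f"ok: {tool_name} -> {result}")
        except Exception as exc:
            # Fallback behavior: record the failure and move on.
            log.append(f"fallback: {tool_name} failed ({exc})")
    return log
```

Even in this toy form, most of the code is control logic rather than task logic, which is the extra infrastructure that paragraph describes.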

Which one is more flexible

Agents are more flexible for multi-step work because they can continue through a task instead of waiting for the user after every move. That makes them stronger for research, triage, analysis, and workflow execution.

Assistants are more flexible at the experience layer. They fit customer support chat, internal help desks, writing aids, and other environments where the user wants ongoing back-and-forth rather than a hidden execution engine.

Best fit by use case

Choose an assistant when:

- The user wants explanations, suggestions, or drafting help in chat

- The interaction is short and mostly turn-based

- You want minimal automation risk and continuous user supervision

- The product value is the conversation itself

Choose an agent when:

- The system must complete work across several steps

- It needs to query tools or data sources before acting

- You want less back-and-forth and more outcome-oriented behavior

- The workflow includes approvals, handoffs, or complex tool use

Where people get confused

The confusion usually comes from product naming. Many assistants now have agent-like capabilities, and many agents still present themselves through a conversational interface. The boundary is not the chat window. The boundary is whether the system is primarily there to interact or primarily there to execute.

Another mistake is assuming assistants are “less advanced.” That is not always true. A well-designed assistant may be the better product choice if strong user control matters more than autonomous task execution.

Templates and implementation reality

If your use case is already a structured business process, a workflow template plus a small assistant layer may be enough. For example, a support drafting assistant can sit on top of a workflow that gathers ticket metadata and account details. You do not need a fully agentic system just because a model is involved.
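
A rough sketch of that split, assuming tickets and accounts are available as plain dictionaries and the model call is passed in as a `generate` callable. The function names, field names, and prompt wording are hypothetical.

```python
from typing import Callable, Dict


def gather_context(ticket_id: str, tickets: Dict[str, dict], accounts: Dict[str, dict]) -> dict:
    """Workflow step: deterministic lookups, no model involved."""
    ticket = tickets[ticket_id]
    account = accounts[ticket["customer_id"]]
    return {
        "ticket_id": ticket_id,
        "subject": ticket["subject"],
        "plan": account["plan"],
    }


def build_prompt(context: dict) -> str:
    """Assistant layer: turn the gathered fields into a drafting request."""
    return (
        f"Draft a support reply for ticket {context['ticket_id']}.\n"
        f"Subject: {context['subject']}\n"
        f"Customer plan: {context['plan']}"
    )


def draft_reply(
    ticket_id: str,
    tickets: Dict[str, dict],
    accounts: Dict[str, dict],
    generate: Callable[[str], str],
) -> str:
    """Fixed workflow first, then a single model call for the draft."""
    context = gather_context(ticket_id, tickets, accounts)
    return generate(build_prompt(context))
```

The model never decides what to fetch or what to do next. It only writes the draft, so the human reviewing it remains the coordinator.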

By contrast, if the system must decide which sources to query, when to escalate, or which workflow to trigger, you are moving into agent territory and should design for safeguards from the start.
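
For contrast, the following sketch shows the kind of decisions that push a system into agent territory. Simple keyword rules stand in for what would normally be a model's judgment, and the source names, workflow names, and escalation threshold are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Decision:
    sources: List[str]
    escalate: bool
    workflow: str


def decide(text: str, failed_attempts: int = 0) -> Decision:
    """Pick sources to query, whether to escalate, and which workflow to trigger."""
    lowered = text.lower()

    # Which sources to query.
    sources: List[str] = []
    if "refund" in lowered or "invoice" in lowered:
        sources.append("billing_db")
    if "error" in lowered or "crash" in lowered:
        sources.append("app_logs")
    if not sources:
        sources.append("knowledge_base")

    # When to escalate (a safeguard, decided before any workflow runs).
    escalate = failed_attempts >= 2 or "legal" in lowered

    # Which workflow to trigger.
    if escalate:
        workflow = "human_handoff"
    elif "refund" in lowered:
        workflow = "refund_review"
    else:
        workflow = "standard_reply"
    return Decision(sources, escalate, workflow)
```

Here the escalation check runs before workflow selection, so a request that trips the safeguard can never reach an automated workflow.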

FAQ

Can an assistant be an agent?

Yes. Some products combine both. The interface may look like an assistant while the backend uses agentic orchestration to complete tasks.

Are agents always autonomous?

No. Many useful agents operate with human approval steps and narrow tool permissions. Autonomy is a spectrum, not an all-or-nothing property.
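
One way to picture that spectrum is as a setting the system checks before each action. The level names and the `risky` flag below are hypothetical, a sketch of the idea rather than a standard taxonomy.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    SUGGEST_ONLY = 0           # behaves like an assistant: never acts
    APPROVE_EVERY_ACTION = 1   # acts only after a human says yes
    APPROVE_RISKY_ACTIONS = 2  # acts alone on low-risk steps
    FULL = 3                   # acts without asking


def next_move(level: Autonomy, risky: bool) -> str:
    """Return 'suggest', 'ask_approval', or 'act' for a proposed action."""
    if level == Autonomy.SUGGEST_ONLY:
        return "suggest"
    if level == Autonomy.APPROVE_EVERY_ACTION:
        return "ask_approval"
    if level == Autonomy.APPROVE_RISKY_ACTIONS:
        return "ask_approval" if risky else "act"
    return "act"
```

The same agent code can run at any of these levels, so narrowing autonomy becomes a configuration change rather than a rewrite.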

Which is better for internal enterprise tools?

It depends on the job. Internal Q&A often fits an assistant. Internal task execution, triage, and multi-system operations more often fit an agent.

Conclusion

Assistants are best understood as interaction-first AI systems. Agents are task-first AI systems. They can overlap, but the design question is different: do you mainly need a conversation, or do you mainly need work to get done across tools and steps?