What Is DeerFlow and When Should You Use It for Deep Research

A practical introduction to DeerFlow, how it works, and where it fits in a real research workflow.

DeerFlow is an open-source research agent framework built for web research, tool use, and report generation. This guide explains what it does, how it is structured, and when it makes more sense than a simple chat interface.

DeerFlow is one of a growing number of open-source systems built around the idea of a research agent rather than a single chat window. At a basic level, it gives a language model a structured workflow and access to tools, so that it can turn a vague question into a clearer plan, gather material, and produce a longer output such as a report, presentation, or audio summary.

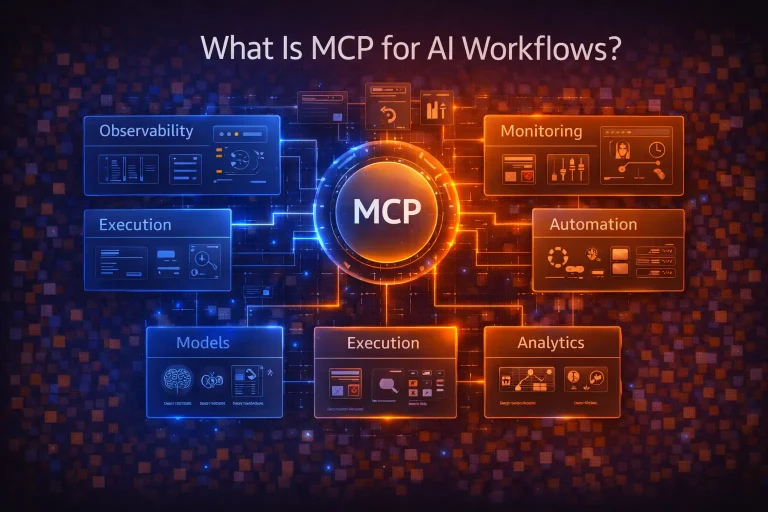

The project describes DeerFlow as a community-driven deep research framework, open-sourced by ByteDance, with support for search, crawling, Python execution, MCP integration, and report generation.

That description matters because a lot of people first encounter DeerFlow by seeing it grouped together with “AI agents,” “deep research,” or “Manus-like” tools. Those labels are directionally right, but they hide the practical question: what is DeerFlow actually good at, and who should spend time setting it up?

What DeerFlow Is

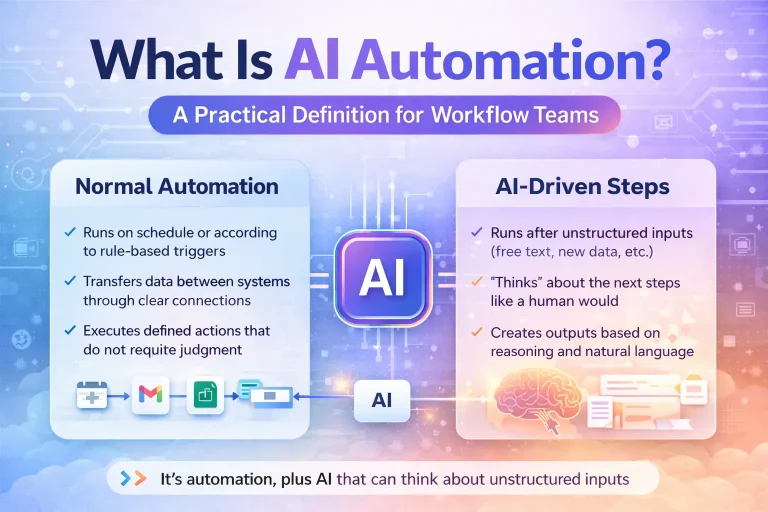

DeerFlow is an open-source framework for multi-step research tasks. Instead of asking a model one question and getting one answer, you give the system a topic and let it plan, search, inspect sources, run code when needed, and assemble a more structured result. Its public documentation and repository describe a modular workflow built on LangGraph, with roles such as coordinator, planner, researcher, coder, and reporter.

In practice, that means DeerFlow sits somewhere between a chat assistant and a custom automation stack. It is more structured than asking ChatGPT to “research this topic,” but less rigid than a traditional workflow builder such as n8n or Make. The unit of work is not a static automation recipe; it is an evolving research job with planning, feedback, retrieval, and synthesis.

That makes DeerFlow especially relevant for teams or solo operators who need repeatable research output: market scans, technical comparisons, document-grounded reports, topic briefings, or analyst-style summaries that need more than one search query and more than one pass.

How DeerFlow Works

The core public architecture is straightforward. DeerFlow starts with a coordinator that manages the overall run, passes the task to a planner that breaks down the problem, then hands work to specialist components for research or coding, and finally compiles the result in a reporting stage. The repository also notes a human-in-the-loop planning flow, which lets the user refine or accept the research plan before execution continues.
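The hand-off between roles can be illustrated with a minimal sketch in plain Python. This is not DeerFlow's code or API: every name here (`Step`, `RunState`, `planner`, `researcher`, `coder`, `reporter`, `coordinator`, `review_plan`) is hypothetical, and the workers return placeholder strings instead of calling a model or a tool. It only shows the shape of the flow, including a plan-review callback standing in for the human-in-the-loop step.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Step:
    description: str
    kind: str  # "research" or "code" (hypothetical labels)


@dataclass
class RunState:
    question: str
    plan: List[Step] = field(default_factory=list)
    findings: List[str] = field(default_factory=list)


def planner(question: str) -> List[Step]:
    # Hypothetical planner: a real one would ask a model to decompose the task.
    return [
        Step(f"Collect sources on: {question}", "research"),
        Step("Tabulate the collected figures", "code"),
    ]


def researcher(step: Step) -> str:
    # Placeholder for search and crawling.
    return f"[research] {step.description}"


def coder(step: Step) -> str:
    # Placeholder for code execution.
    return f"[code] {step.description}"


def reporter(state: RunState) -> str:
    lines = [f"Report: {state.question}"]
    lines += [f"- {finding}" for finding in state.findings]
    return "\n".join(lines)


def coordinator(
    question: str,
    review_plan: Callable[[List[Step]], List[Step]],
) -> str:
    state = RunState(question=question)
    # Human-in-the-loop: the reviewer may edit or accept the plan
    # before any step is executed.
    state.plan = review_plan(planner(question))
    workers = {"research": researcher, "code": coder}
    for step in state.plan:
        state.findings.append(workers[step.kind](step))
    return reporter(state)


def accept_plan(plan: List[Step]) -> List[Step]:
    return plan


report = coordinator("EV battery suppliers", accept_plan)
print(report)
```

Passing a different `review_plan` function, for example one that drops the coding step, changes what runs without touching the workers. That is the practical point of reviewing the plan before execution.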

1. Planning first, not just answering

One of the useful parts of DeerFlow is that it does not treat every prompt as a direct-answer problem. For broad or fuzzy requests, it can ask clarifying questions and shape a research plan first. According to the project materials, this clarification layer is meant to improve precision, reduce wasted searching, and lower unnecessary token usage.

That is a better fit for research than the common “single prompt, single response” pattern. If the original brief is weak, the final report is usually weak too. DeerFlow tries to fix that early in the process.

2. Tool-backed retrieval

DeerFlow is not limited to a model’s built-in knowledge. The project documents support for several search options, including Tavily, Brave Search, DuckDuckGo, arXiv, Searx/SearxNG, and BytePlus InfoQuest. It also supports crawling tools such as Jina and InfoQuest.

This matters because “research” usually breaks down at the retrieval layer. A model can write fluent text, but if the inputs are weak, stale, or too shallow, the result still looks polished while missing the point. DeerFlow’s value is that it is designed around retrieval and source gathering rather than treating those as an afterthought.
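To make the "weak or stale inputs" problem concrete, here is a small sketch of one hygiene step a retrieval layer might apply: merging hits from several backends, dropping duplicates, and filtering out old results. The `Hit` type, the `normalize` rule, and the `merge_hits` function are assumptions made for illustration and are not part of DeerFlow; the example data is invented.

```python
from dataclasses import dataclass
from datetime import date
from typing import Iterable, List
from urllib.parse import urlsplit


@dataclass(frozen=True)
class Hit:
    url: str
    title: str
    published: date
    backend: str


def normalize(url: str) -> str:
    # Treat URLs as equal if host (case-insensitive) and path
    # (ignoring a trailing slash) match. A deliberately simple rule.
    parts = urlsplit(url)
    return f"{parts.netloc.lower()}{parts.path.rstrip('/')}"


def merge_hits(batches: Iterable[List[Hit]], not_before: date) -> List[Hit]:
    seen = set()
    merged = []
    for batch in batches:
        for hit in batch:
            key = normalize(hit.url)
            if hit.published < not_before or key in seen:
                continue  # stale or already collected
            seen.add(key)
            merged.append(hit)
    # Newest first, so later stages read fresh material before older material.
    return sorted(merged, key=lambda h: h.published, reverse=True)


backend_a = [
    Hit("https://example.com/report/", "Report", date(2025, 3, 1), "a"),
    Hit("https://example.com/old", "Old", date(2019, 1, 1), "a"),
]
backend_b = [
    Hit("https://EXAMPLE.com/report", "Report (dup)", date(2025, 3, 1), "b"),
    Hit("https://example.org/news", "News", date(2025, 5, 2), "b"),
]

fresh = merge_hits([backend_a, backend_b], not_before=date(2024, 1, 1))
print([h.title for h in fresh])  # ['News', 'Report']
```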

3. Code execution when needed

The repository also highlights Python execution as part of the workflow. That gives DeerFlow a path for basic analysis tasks such as data cleanup, comparison logic, or small calculation steps during a research run.

Not every research workflow needs code, but when yours does, it is useful to keep the analysis inside the same run instead of moving between five separate tools.
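As a rough illustration of what a "small calculation step" looks like, the sketch below runs a short snippet in a child Python interpreter with a timeout and captures its output. This is an assumption about how such a step could be wired, not a description of DeerFlow's mechanism; `run_snippet` is a hypothetical helper and the vendor prices are invented. A child process with a timeout is not a security sandbox.

```python
import subprocess
import sys


def run_snippet(code: str, timeout_s: float = 5.0) -> str:
    """Run a Python snippet in a child interpreter and return its stdout."""
    result = subprocess.run(
        [sys.executable, "-c", code],
        capture_output=True,
        text=True,
        timeout=timeout_s,  # raises subprocess.TimeoutExpired if exceeded
    )
    if result.returncode != 0:
        raise RuntimeError(result.stderr.strip())
    return result.stdout.strip()


# Invented comparison data: find the cheapest vendor.
snippet = """
prices = {"Vendor A": 1200, "Vendor B": 950, "Vendor C": 1010}
cheapest = min(prices, key=prices.get)
print(f"{cheapest} {prices[cheapest]}")
"""

output = run_snippet(snippet)
print(output)  # Vendor B 950
```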

4. Structured outputs

DeerFlow is not just aimed at raw notes. The public materials describe report generation, post-editing, podcast output, and simple presentation generation. The official site also says DeerFlow 2.0 is moving from a deep research agent toward a broader “super agent” model, adding ideas such as memory, long task running, planning and sub-tasking, sandboxed file operations, and broader model support.

That does not mean every user needs DeerFlow 2.0 features on day one. But it does show the direction of the project: not just researching a topic, but handling a longer chain of agent work around that topic.

What DeerFlow Is Good For

DeerFlow makes the most sense when the task has three traits:

- the question is broad enough that planning matters

- fresh external information is needed

- the final output should be more structured than a casual chat reply

Typical examples include:

- competitive research across multiple companies or products

- topic briefings that need source collection and synthesis

- technical landscape reports that mix web results and code checks

- research workflows that pull from private knowledge bases as well as the open web

- repeatable analyst-style reports for internal teams

The repository’s documented support for private knowledge sources such as RAGFlow, Qdrant, Milvus, VikingDB, MOI, and Dify is relevant here. That means DeerFlow is not only for public web research; it can also be pointed at internal material if you wire the retrieval layer correctly.

Where DeerFlow Is Not the Best Fit

It is easy to overuse research agents. DeerFlow is not the right answer for every workflow.

Simple automations

If your real task is “watch for a form submission and push data into Airtable,” DeerFlow is probably the wrong tool. A workflow platform such as n8n, Make, or a direct script is usually simpler and easier to maintain.

Low-context chat

If you only need a quick answer, a chat model is faster. DeerFlow adds planning, tool calls, and execution overhead. That overhead is worth it when the task is messy or high-context. It is unnecessary when the task is small.

Non-technical users who want zero setup

The open-source repo currently documents local setup with Python 3.12+, Node.js 22+, uv, and optional web UI dependencies. That is reasonable for developers, but it is still setup work. There is an official site with a hosted experience, yet self-hosting remains more technical than using a mainstream chat product.

Setup Considerations Before You Use DeerFlow

Model layer

DeerFlow’s public docs say it supports many models through LiteLLM and can work with OpenAI-compatible APIs as well as open models such as Qwen, depending on configuration. That flexibility is useful, but it also means output quality depends heavily on your model choice and configuration discipline.

If your report quality matters, do not treat the model layer as a minor detail. Planning quality, extraction quality, and final writing quality are all affected by model choice.
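One way to take the model layer seriously is to decide explicitly which model each role uses instead of relying on a single default. The sketch below is a hypothetical mapping, not DeerFlow's configuration format. The role names follow the roles described earlier in this article, and the model names are placeholders that do not refer to real models.

```python
from typing import Dict

# Placeholder model names; substitute whatever your provider offers.
DEFAULT_MODEL = "general-purpose-model"

# Hypothetical per-role overrides. Roles not listed use the default.
ROLE_MODELS: Dict[str, str] = {
    "planner": "strong-reasoning-model",
    "reporter": "strong-writing-model",
}


def model_for(role: str) -> str:
    """Return the model assigned to a role, falling back to the default."""
    return ROLE_MODELS.get(role, DEFAULT_MODEL)


for role in ("coordinator", "planner", "researcher", "coder", "reporter"):
    print(f"{role}: {model_for(role)}")
```

Writing the mapping down makes it easier to change one role at a time and compare report quality before and after.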

Search and crawling quality

DeerFlow can use several search backends, but those are not interchangeable in practice. Search quality, freshness, coverage, and extraction reliability vary a lot. For public web research, the search stack often matters nearly as much as the model stack.

Knowledge base integration

If your use case involves internal documents, the retrieval setup is where most of the real work lives. The framework can connect to vector stores and RAG systems, but you still need good chunking, retrieval settings, and source hygiene.
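Chunking is the easiest of these to show in code. The sketch below splits text into fixed-size word windows with overlap, so that a sentence cut at a boundary still appears whole in a neighboring chunk. The function name and default sizes are assumptions for illustration; real pipelines often chunk by tokens, sentences, or document structure instead.

```python
from typing import List


def chunk_words(text: str, size: int = 200, overlap: int = 40) -> List[str]:
    """Split text into chunks of `size` words, each sharing `overlap`
    words with the previous chunk."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


sample = " ".join(f"w{i}" for i in range(10))
chunks = chunk_words(sample, size=4, overlap=1)
print(chunks)  # ['w0 w1 w2 w3', 'w3 w4 w5 w6', 'w6 w7 w8 w9']
```

Larger overlap improves the odds that a relevant passage is retrieved intact, at the cost of more stored chunks and more duplicated text in the results.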

Review workflow

Even if DeerFlow produces a decent first draft, research output still benefits from human review. The planner can reduce wasted effort, but it does not replace editorial judgment. The more important the report, the more you should treat DeerFlow as a research assistant rather than an autonomous analyst.

Common Mistakes When Evaluating DeerFlow

Comparing it to the wrong tools

DeerFlow is often compared either to chatbot products or to rigid automation builders. Neither comparison is entirely wrong, but neither is complete. The closer comparison is to other research-oriented agent frameworks that combine tool use, planning, and multi-step execution.

Judging it from one demo

A demo can show that DeerFlow can produce a report. It does not show whether it fits your workflow. The better test is whether it handles your recurring research questions with fewer manual steps and more consistent structure.

Ignoring maintenance cost

Open-source agent systems are attractive because you can inspect and control them. The tradeoff is maintenance. Search providers change, model APIs change, and retrieval quality drifts over time. If you want a self-hosted research stack, budget for that reality.

When a Prebuilt DeerFlow Workflow or Template Makes Sense

If your team repeatedly runs the same kind of research job, a prebuilt template starts to make sense. That is especially true when the planning logic, search configuration, output structure, and review checkpoints are already known. Building from scratch each time is useful for exploration, but it is rarely the best long-term operating model.

For WorkflowLibrary.ai, that is where DeerFlow-related templates can become useful: not as a shortcut for beginners who want to avoid understanding the system, but as a faster starting point for recurring use cases such as market research, content research, technical comparisons, or document-grounded reporting.

Final Take

DeerFlow is worth paying attention to because it treats research as a workflow rather than a prompt. The project is open source, MIT-licensed, and already combines planning, retrieval, crawling, code execution, report creation, and growing agent tooling in one stack. At the same time, it is still best approached as infrastructure for serious research tasks, not as a universal replacement for chat assistants or no-code automations.

If your work involves repeated web research, source synthesis, and structured output, DeerFlow is a credible tool to test.