Best AI Agent Frameworks for Self-Hosting

A practical comparison of the strongest AI agent frameworks for teams that want to self-host, control infrastructure, and avoid being locked into a hosted agent product.

This guide compares the best AI agent frameworks for self-hosting, from low-level orchestration runtimes to more opinionated multi-agent frameworks. It focuses on who each framework fits best, how much control it offers, and what complexity you are signing up for.

For most serious Python teams, LangGraph is the best self-hosted AI agent framework when control, state, and long-running orchestration matter most. CrewAI is easier to approach if you want a more opinionated multi-agent framework with production-oriented flows. AutoGen remains relevant for multi-agent application design, especially for teams already familiar with Microsoft’s ecosystem, though new users should note that the project now also points them toward Microsoft Agent Framework. Mastra is the strongest TypeScript-native option in this list. LlamaIndex is especially compelling when document-heavy agents are central. OpenManus is a useful open general-agent layer for builders who want a more product-shaped starting point.

The biggest mistake here is treating all “agent frameworks” as interchangeable. They are not. Some are orchestration backbones, some are opinionated application frameworks, and some are better seen as open starting points for a particular style of agent.

Who this guide is for

This guide is for teams that want to run their own agent stack, control infrastructure, and keep the option to modify runtime behavior, tools, and deployment patterns. It is not aimed at users who simply want the easiest hosted product.

How the frameworks were selected

The list prioritizes frameworks that are openly available, actively maintained, clearly useful in real builds, and suitable for self-hosted or controlled deployments. Selection criteria included orchestration depth, workflow control, language ecosystem, state handling, tool integration, and how much production complexity each framework can realistically support.

Quick comparison table

| Framework | Best for | Main strength | Main limitation | Skill level |
|---|---|---|---|---|
| LangGraph | Stateful, long-running agent systems | Durable execution and graph-level control | Low-level and design-heavy | Advanced |
| CrewAI | Production-oriented multi-agent workflows | Clear concepts around agents, crews, and flows | More opinionated than raw orchestration runtimes | Intermediate |
| AutoGen | Multi-agent applications and experimentation | Strong agent conversation model and extensions | Evolving product direction can confuse new adopters | Intermediate |
| Mastra | TypeScript teams | Modern TypeScript stack with agents and workflows | Best fit is narrower if you are all-in on Python | Intermediate |
| LlamaIndex | Document-heavy agents | Strong document and workflow story | Best value appears when knowledge workflows are central | Intermediate |
| OpenManus | Open general-agent builds | Open framework approach to general AI agents | Less battle-tested as a universal backbone than larger ecosystems | Intermediate |

The best frameworks, explained

1. LangGraph

LangGraph is the strongest recommendation when your self-hosted system must handle state, branching, pauses, resumes, tool calls, and human approval without falling apart. It is the backbone choice for teams building serious agent systems rather than demos.

Choose it when: flexibility and workflow control matter more than speed of setup.

Do not choose it when: you want the easiest possible learning curve.
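To make "state, pauses, resumes, and human approval" concrete, here is a minimal sketch in plain Python. It is not the LangGraph API: `MiniGraph`, `interrupt_before`, `draft`, and `send` are hypothetical names chosen for illustration, and the in-memory `checkpoints` dict stands in for whatever durable store a real deployment would use. The idea it shows is that the run stops before a sensitive node, saves its state, and later continues from that point with the human decision merged in.

```python
import json
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional, Set

# Hypothetical illustration only; this is NOT the LangGraph API.
State = Dict[str, object]
Node = Callable[[State], Optional[str]]  # returns the next node name, or None to stop


@dataclass
class MiniGraph:
    nodes: Dict[str, Node] = field(default_factory=dict)
    interrupt_before: Set[str] = field(default_factory=set)
    checkpoints: Dict[str, str] = field(default_factory=dict)  # run_id -> JSON snapshot

    def add_node(self, name: str, fn: Node) -> None:
        self.nodes[name] = fn

    def run(self, run_id: str, start: str, state: State) -> State:
        return self._loop(run_id, start, dict(state), skip_interrupt=False)

    def resume(self, run_id: str, updates: State) -> State:
        # Load the snapshot, merge the human decision, continue where we stopped.
        saved = json.loads(self.checkpoints.pop(run_id))
        state = {**saved["state"], **updates}
        return self._loop(run_id, saved["next"], state, skip_interrupt=True)

    def _loop(self, run_id: str, current: Optional[str], state: State,
              skip_interrupt: bool) -> State:
        while current is not None:
            if current in self.interrupt_before and not skip_interrupt:
                # Persist the state and the node to resume at, then hand control back.
                self.checkpoints[run_id] = json.dumps({"next": current, "state": state})
                state["status"] = "paused"
                return state
            skip_interrupt = False
            current = self.nodes[current](state)
        state["status"] = "done"
        return state


def draft(state: State) -> Optional[str]:
    state["draft"] = f"Reply to: {state['request']}"
    return "send"


def send(state: State) -> Optional[str]:
    # Branch on the human decision supplied at resume time.
    state["sent"] = bool(state.get("approved"))
    return None


graph = MiniGraph(interrupt_before={"send"})
graph.add_node("draft", draft)
graph.add_node("send", send)

paused = graph.run("run-1", "draft", {"request": "refund order 42"})
final = graph.resume("run-1", {"approved": True})
```

Here `paused` carries the drafted reply and a `"paused"` status, and `final` is produced only after the approval arrives. Writing even this toy version shows why the design work is non-trivial: you have to decide what is serializable, where interrupts are allowed, and how resumed input merges into existing state.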

2. CrewAI

CrewAI is one of the best fits for teams that want self-hosting plus a more opinionated mental model. Its documentation is clear about agents, crews, and flows, which makes it easier to map from business workflow to implementation than a lower-level runtime. It is a good middle ground between raw control and productive defaults.

Choose it when: you want structured multi-agent automation without starting from graph primitives.

Do not choose it when: you need the lowest-level orchestration control.
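The appeal of a role-based mental model is easier to see in code. The sketch below is plain Python and deliberately does not import or imitate the CrewAI API: the `Agent`, `Task`, and `Crew` classes and their fields are hypothetical stand-ins, and the lambdas replace real model calls. It only illustrates how a business workflow ("research the lead, then write a brief") maps onto named roles and ordered tasks.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical classes for illustration only; this is NOT the CrewAI API.


@dataclass
class Agent:
    role: str
    work: Callable[[str, str], str]  # (task description, context so far) -> output


@dataclass
class Task:
    description: str
    agent: Agent


@dataclass
class Crew:
    tasks: List[Task]

    def run(self, initial_input: str) -> str:
        # Run tasks in order, passing each output on as the next task's context.
        context = initial_input
        for task in self.tasks:
            context = task.agent.work(task.description, context)
        return context


# Stand-ins for model-backed agents.
researcher = Agent("researcher", lambda desc, ctx: f"{ctx} [facts gathered]")
writer = Agent("writer", lambda desc, ctx: f"Summary of {ctx}")

crew = Crew([
    Task("Collect facts about the lead", researcher),
    Task("Write a one-line brief", writer),
])
result = crew.run("Acme Corp")
```

The tradeoff is visible here too: the sequence and hand-off rules live inside the framework-shaped abstraction, which is productive until you need control flow that the abstraction does not anticipate.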

3. AutoGen

AutoGen still belongs on this list because it remains an important open framework for multi-agent AI applications, especially with its AgentChat, Core, Studio, and extension layers. The main caveat is directional clarity: the project explicitly tells new users to also look at Microsoft Agent Framework. That does not make AutoGen irrelevant, but it does mean adopters should be aware of the broader Microsoft roadmap.

Choose it when: you value its multi-agent programming model or already know the ecosystem.

Do not choose it when: you want the cleanest long-term signal for a fresh greenfield build.

4. Mastra

Mastra is the best self-hosted choice in this list for teams building in TypeScript. It combines agents, workflows, model routing, and human-in-the-loop capabilities in a modern stack that feels natural in Node and frontend-heavy environments. If your team ships with Next.js or Node-first infrastructure, Mastra is easier to justify than forcing a Python framework into the stack.

Choose it when: your organization is TypeScript-native.

Do not choose it when: your agent infrastructure is already deeply centered on Python.

5. LlamaIndex

LlamaIndex is especially valuable when the agent is only half the story and the other half is documents. Its workflows engine is event-driven and async-first, while the broader platform is strongly oriented around document processing, extraction, indexing, and retrieval. That makes it an unusually good self-hosted path for document agents.

Choose it when: document-heavy pipelines are central to the business problem.

Do not choose it when: knowledge-intensive document workflows are not a core requirement.
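As a rough picture of what "event-driven and async-first" means for a document pipeline, the following sketch uses only Python's standard `asyncio`. It is not the LlamaIndex API: `MiniWorkflow`, the event classes, and the `split` and `summarize` steps are hypothetical, and the string operations stand in for real parsing and model calls. Each step consumes one event type and emits the next, and per-chunk work runs concurrently.

```python
import asyncio
from dataclasses import dataclass
from typing import Awaitable, Callable, Dict, List

# Hypothetical illustration only; this is NOT the LlamaIndex API.


@dataclass
class DocumentReceived:
    text: str


@dataclass
class ChunksReady:
    chunks: List[str]


@dataclass
class Done:
    summary: str


Handler = Callable[[object], Awaitable[object]]


class MiniWorkflow:
    def __init__(self) -> None:
        self._handlers: Dict[type, Handler] = {}

    def on(self, event_type: type, handler: Handler) -> None:
        self._handlers[event_type] = handler

    async def run(self, first_event: object) -> Done:
        # Dispatch each event to the step registered for its type until Done.
        event = first_event
        while not isinstance(event, Done):
            event = await self._handlers[type(event)](event)
        return event


async def split(ev: DocumentReceived) -> ChunksReady:
    # Stand-in for document parsing and chunking.
    return ChunksReady([p.strip() for p in ev.text.split("\n\n") if p.strip()])


async def summarize(ev: ChunksReady) -> Done:
    async def first_sentence(chunk: str) -> str:
        await asyncio.sleep(0)  # stand-in for an awaited model call
        return chunk.split(".")[0]

    # Process all chunks concurrently; gather preserves input order.
    parts = await asyncio.gather(*(first_sentence(c) for c in ev.chunks))
    return Done(" | ".join(parts))


workflow = MiniWorkflow()
workflow.on(DocumentReceived, split)
workflow.on(ChunksReady, summarize)

doc = "Invoice 17 is overdue. Pay now.\n\nContract renews in May. See terms."
outcome = asyncio.run(workflow.run(DocumentReceived(doc)))
```

The event-per-step shape is what makes document pipelines easy to extend: adding an extraction or indexing stage means adding one event type and one handler rather than rewriting a linear script.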

6. OpenManus

OpenManus is the most open-ended “general AI agent” option in this list. It is not the safest default for every team, but it is worth considering when you want an open framework layer that feels closer to general-agent behavior than to pure orchestration infrastructure.

Choose it when: you want an open starting point for general agents and are comfortable shaping the rest of the stack yourself.

Do not choose it when: you need the deepest ecosystem maturity or the most proven orchestration runtime.

Which framework is best for which type of user?

- Best for advanced orchestration: LangGraph

- Best for balanced multi-agent productivity: CrewAI

- Best for teams already in the Microsoft agent world: AutoGen

- Best for TypeScript teams: Mastra

- Best for document agents: LlamaIndex

- Best for open general-agent experimentation: OpenManus

Tradeoffs and common decision mistakes

The first mistake is choosing a framework based on hype rather than fit. Self-hosting is not a feature checkbox. It means deployment, observability, permissions, retries, model routing, and operational ownership. A framework that looks flexible in a demo may be the wrong choice for a small team that mainly needs one internal assistant.

The second mistake is picking the lowest-level runtime when the team really wants productive defaults. LangGraph is powerful, but it is not the fastest route to a useful internal prototype. CrewAI or Mastra can get some teams to value faster.

The third mistake is ignoring language ecosystem fit. Python-first teams should be careful before defaulting to a TypeScript framework, and TypeScript-first teams should be equally careful about adopting a Python-heavy tool just because it is popular.

A template can reduce setup time for common workflows such as research routing, CRM enrichment, or document triage. But when self-hosting is the goal, the bigger decision is framework architecture, not the first demo flow.
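Operational ownership, as described under the first mistake above, includes details such as retries and model routing that a hosted product would otherwise hide. The sketch below shows one simple way those two concerns can combine: exponential backoff per model, then fallback to the next model. All names (`call_with_fallback`, `ModelError`, `primary_model`, `backup_model`) are hypothetical and not taken from any framework in this guide, and the model functions are stubs.

```python
import time
from typing import Callable, List, Optional, Sequence


class ModelError(Exception):
    """Hypothetical error type for a failed model call."""


def call_with_fallback(
    prompt: str,
    models: Sequence[Callable[[str], str]],
    max_attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Try each model in order, retrying with exponential backoff before falling back."""
    last_error: Optional[Exception] = None
    for model in models:
        for attempt in range(max_attempts):
            try:
                return model(prompt)
            except ModelError as exc:
                last_error = exc
                # Back off before retrying the same model; skip the wait
                # after the final attempt and move on to the next model.
                if attempt < max_attempts - 1:
                    sleep(base_delay * 2 ** attempt)
    raise RuntimeError("all models failed") from last_error


def primary_model(prompt: str) -> str:
    # Stub that always fails, to exercise the fallback path.
    raise ModelError("primary unavailable")


def backup_model(prompt: str) -> str:
    return f"backup: {prompt}"


# Record requested delays instead of actually sleeping.
delays: List[float] = []
answer = call_with_fallback(
    "hi", [primary_model, backup_model],
    max_attempts=2, base_delay=0.5, sleep=delays.append,
)
```

Injecting `sleep` keeps the helper testable without real waiting. In a real deployment you would likely also want jitter, per-model timeouts, and logging of each failed attempt, which is exactly the kind of work self-hosting makes your team responsible for.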

FAQ

Which framework is easiest to start with?

CrewAI is often the easier starting point for Python teams, while Mastra is easier for TypeScript teams. LangGraph usually has the steepest learning curve of the three.

Which framework is best for advanced users?

LangGraph is the strongest choice when advanced control and durable stateful execution matter most.

Which framework is best for teams?

That depends on team stack. CrewAI works well for Python teams that want structure; Mastra works well for TypeScript teams; LangGraph suits teams with strong engineering depth.

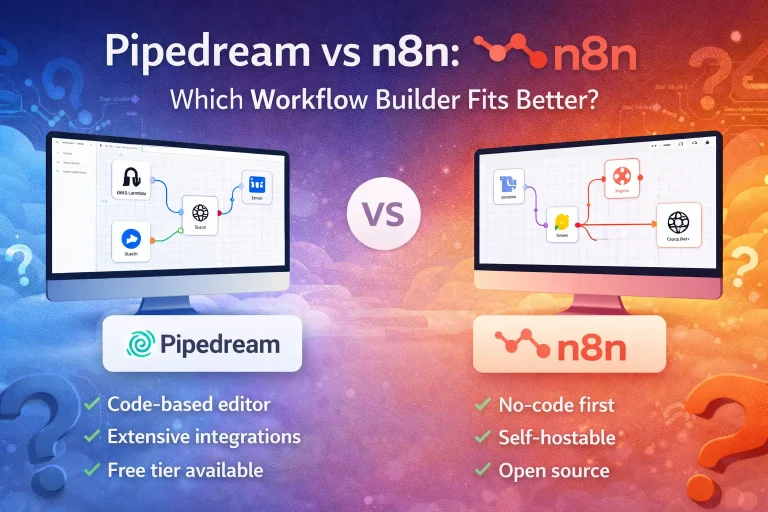

Which framework is best for no-code users?

None of these is truly no-code. They are frameworks for builders, not drag-and-drop workflow tools.

Conclusion

For self-hosting, LangGraph is the best orchestration backbone, CrewAI is the best balanced Python framework, and Mastra is the best TypeScript-native choice. AutoGen, LlamaIndex, and OpenManus each make sense for narrower but important scenarios. Pick the framework that matches your operational reality, not the one with the broadest promise.